SEO Experiments That Actually Move Rankings (2026): 25 Tests + Tracking Template

Most teams ship changes, refresh a page, add links, and hope the algorithm “likes it.” That’s not a test. Real SEO experiments are controlled, documented, and measured, so you can prove what moved rankings, clicks, and conversions. This guide is a practical playbook for running SEO experiments in 2026 without risking your traffic: what to test, how to track outcomes, how to avoid false positives, and how to turn results into repeatable wins. If you’ve tried “SEO tests” before and felt unsure what worked, these SEO experiments will fix that.

Before you run SEO experiments, set a baseline. You’ll get cleaner “before” data with a structured audit and a consistent reporting workflow. Use these internal resources as your foundation: advanced SEO audit checklist, SEO KPI dashboard, and the best SEO report template.

1) What are SEO experiments? (and why most fail)

SEO experiments are controlled tests where you change one meaningful variable and measure whether that change caused a lift (or drop) in outcomes like clicks, CTR, rankings, conversions, or indexation. They can be page-level “SEO case tests” (one page vs its baseline), group tests (batch of similar pages vs control batch), or “SEO split testing” where you compare matched page groups that share intent and similar baseline performance.
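One way to build matched groups for SEO split testing is to rank candidate pages by baseline clicks, pair neighbours, and randomly send one page of each pair to the test group. The sketch below assumes every page already shares the same intent and template, and that `baseline_clicks` comes from a GSC page-level export. The function name and the fixed seed are illustrative choices, not a standard tool.

```python
import random


def matched_pair_split(
    baseline_clicks: dict[str, int], seed: int = 42
) -> tuple[list[str], list[str]]:
    """Split pages into test and control groups with similar baselines.

    Pages are ranked by baseline clicks, paired with their nearest neighbour,
    and one page of each pair is randomly assigned to the test group.
    """
    rng = random.Random(seed)  # fixed seed so the split can be reproduced and logged
    ranked = sorted(baseline_clicks, key=baseline_clicks.get, reverse=True)
    test: list[str] = []
    control: list[str] = []
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)
        test.append(pair[0])
        control.append(pair[1])
    # With an odd page count, the lowest-traffic page is left out of both groups.
    return test, control


pages = {"/a": 100, "/b": 90, "/c": 10, "/d": 12}
test_group, control_group = matched_pair_split(pages)
print("test:", test_group, "control:", control_group)
```

Pairing before randomising keeps the two groups' baselines close, which makes a later test-vs-control comparison more meaningful than a purely random split on a small page set.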

The reason SEO experiments feel hard is that search results are noisy. Rankings fluctuate, competitors publish, Google tests SERP layouts, and user behavior changes. Your job isn’t to eliminate noise—it’s to design SEO tests that still deliver a clear answer.

Why most SEO experiments fail

- No isolation: changing titles, headings, internal links, and templates all at once makes results impossible to attribute.

- Weak measurement: tracking only rankings, ignoring clicks, CTR, and conversions—so “wins” don’t translate to outcomes.

- Bad page selection: mixing different intents (informational + transactional) or different authority levels.

- Wrong time window: calling a result too early or comparing mismatched date ranges.

- No documentation: without an annotation log, you forget exactly what changed and when—then repeat mistakes.

Experiment checklist (quick pre-flight)

- Define one variable you’re testing (one change).

- Pick a matched set of pages (same intent, similar baseline).

- Choose primary + secondary KPIs (and where to measure them).

- Set a time window and decision rule (ship / iterate / rollback).

- Write a rollback plan before you ship anything.

- Log the exact change with a timestamp in your annotation log.
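The decision rule and rollback plan from the checklist can be written down as a small function before go-live, so nobody renegotiates them mid-test. This is a sketch: `lift` is assumed to be the relative change in the primary KPI (ideally net of the control group), and the +5% ship and -10% rollback thresholds are hypothetical placeholders to replace with your own.

```python
def decide(
    lift: float,
    days_elapsed: int,
    min_days: int,
    ship_at: float = 0.05,
    rollback_at: float = -0.10,
) -> str:
    """Return 'rollback', 'wait', 'ship', or 'iterate' for one experiment.

    lift: relative change in the primary KPI, e.g. 0.12 for +12%.
    ship_at / rollback_at: hypothetical thresholds agreed before go-live.
    """
    if lift <= rollback_at:
        return "rollback"  # the stop loss applies at any point in the window
    if days_elapsed < min_days:
        return "wait"  # too early to call a winner
    if lift >= ship_at:
        return "ship"
    return "iterate"  # inside the dead zone: refine the change or extend the test


print(decide(lift=0.21, days_elapsed=28, min_days=14))  # ship
```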

2) The 3 rules of valid SEO experiments (isolation, measurement, time window)

Great SEO experiments follow three rules. If you learn nothing else, learn these. They’re the difference between a real test and a random site update.

Rule #1: Isolation (one variable that matters)

Isolation means one meaningful lever per experiment: title format, internal linking pattern, schema type, page module order, crawl rule, or content section. In real SEO experiments, “one lever” can still include small supportive tweaks (like updating a subheading to match a new title), but the core variable must remain the focus.

Example: A CTR test changes titles only. It does not also rewrite introductions, add new internal links, and change schema.

Rule #2: Measurement (KPI + diagnostics)

Measurement means you track a primary KPI and supporting diagnostics. A CTR experiment’s primary KPI is CTR and clicks in GSC. Diagnostics include impressions, query mix, and (optionally) GA4 engagement. Use Google’s title link guidance to avoid patterns that lead to title rewrites or mismatched snippets during SEO tests.

Rule #3: Time window (long enough for signal)

Your time window is your commitment to not overreact. Many SEO experiments “look good” after 2 days and reverse by day 14. Commit to a minimum test duration based on the experiment type (CTR, linking, content refresh, technical, programmatic).

Common mistakes that ruin SEO experiments

- Testing on pages with different intent or different templates.

- Rolling out sitewide changes without a control group or staged batching.

- Calling winners based on one keyword or one day.

- Ignoring seasonality, promotions, holidays, or news spikes.

- Not checking indexation/crawl signals after technical SEO experiments.

- Changing navigation or internal linking globally during a CTR test.

3) SEO experiment framework (Hypothesis → Change → Control → Measure → Learn)

A simple framework makes SEO experiments repeatable. It also makes them easier to explain to stakeholders: you’re not “doing SEO,” you’re running structured SEO tests to prove what works and what doesn’t.

How to pick experiments that are worth your time

Start with constraints. Every site has a bottleneck. The goal of SEO experiments is to identify your biggest constraint, then test the highest-leverage fix.

Common constraints:

- Pages with high impressions but low clicks

- Pages not indexed

- Weak internal links

- Stale content

- Slow UX

- Thin pSEO pages

If you need help choosing experiments from keywords and intent clusters, use the free keyword research template to map opportunities to page groups.

4) Setup: tracking stack (GSC, GA4, rank tracker, log/notes)

Strong SEO experiments start with clean tracking. You don’t need enterprise tooling to run SEO tests, but you do need a consistent process—especially for annotations and comparisons.

Minimum tracking stack

- Google Search Console (GSC): query + page performance, impressions, CTR, position, index coverage.

- GA4: engagement + conversions, so you can connect SEO tests to outcomes (leads, sales, signups).

- Rank tracker: directional monitoring for a stable keyword set (use as a sensor, not a verdict).

- Experiment log: your annotation log for what changed, where, why, who approved, and when.

What to log for every experiment

- Experiment name: consistent naming (e.g., CTR_Title_Format_ServicePages).

- Page list: URLs, grouped by test vs control.

- Baseline window: last 28 days (or relevant period).

- Go-live timestamp: exact date/time (important for diagnosing weird spikes).

- Rollback trigger: define your “stop loss.”
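Keeping the log as structured rows, rather than free-form notes, makes it easy to filter past tests later. A minimal sketch using only the Python standard library; the field names mirror the list above, while the CSV layout and the sample values are assumptions rather than a required format.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import datetime, timezone


@dataclass
class ExperimentLogEntry:
    name: str               # e.g. "CTR_Title_Format_ServicePages"
    test_urls: list[str]
    control_urls: list[str]
    baseline_window: str    # e.g. "last 28 days before go-live"
    go_live: datetime       # exact timestamp, timezone-aware
    rollback_trigger: str   # the "stop loss", written before launch

    def to_row(self) -> dict[str, str]:
        """Flatten the entry into one CSV-friendly row."""
        row = asdict(self)
        row["test_urls"] = " ".join(self.test_urls)
        row["control_urls"] = " ".join(self.control_urls)
        row["go_live"] = self.go_live.isoformat()
        return row


def to_csv(entries: list[ExperimentLogEntry]) -> str:
    """Serialize log entries to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ExperimentLogEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(entry.to_row())
    return buffer.getvalue()


entry = ExperimentLogEntry(
    name="CTR_Title_Format_ServicePages",
    test_urls=["/services/a", "/services/b"],
    control_urls=["/services/c", "/services/d"],
    baseline_window="last 28 days before go-live",
    go_live=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc),
    rollback_trigger="CTR falls 10%+ below control for 7 consecutive days",
)
log_csv = to_csv([entry])
print(log_csv)
```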

Want a standardized workflow? Use the SEO KPI dashboard plus the SEO report template so every test is measured the same way. For tooling comparisons, see best all-in-one SEO tools.

5) KPI checklist (rankings, clicks, CTR, impressions, conversions, indexation)

The KPI you choose should match the experiment type. CTR-focused SEO experiments should be judged by CTR and clicks. Internal linking SEO tests often show up first in impressions and query breadth. Technical SEO experiments often move crawl and indexation signals before traffic moves.

| KPI | Best for | Where to measure | Decision hint |
|---|---|---|---|
| CTR | Titles/snippets, SERP alignment | GSC (query + page) | Look for sustained lift, not one-day spikes |
| Clicks | Meaningful traffic impact | GSC + GA4 landing pages | Confirm clicks increased without impressions dropping |
| Impressions | Coverage + ranking breadth | GSC | Often moves before clicks on content refresh |
| Average position | Directional ranking shift | GSC | Compare position ranges; avoid obsessing over decimals |
| Conversions | Business outcomes | GA4 (events), CRM if available | Small traffic lifts can still be big conversion wins |
| Indexation / crawl | Technical SEO experiments | GSC + logs, sitemap status | Check coverage + discovery; validate with crawling patterns |
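When you compare CTR across a page group, compute it from totals (GSC defines CTR as clicks divided by impressions) rather than averaging per-page CTRs, which lets low-impression pages dominate. A small illustration; the `(clicks, impressions)` rows are made-up numbers.

```python
def group_ctr(rows: list[tuple[int, int]]) -> float:
    """Impression-weighted CTR for a page group: total clicks / total impressions."""
    clicks = sum(c for c, _ in rows)
    impressions = sum(i for _, i in rows)
    return clicks / impressions if impressions else 0.0


def naive_ctr(rows: list[tuple[int, int]]) -> float:
    """Unweighted mean of per-page CTRs, shown only for contrast."""
    per_page = [c / i for c, i in rows if i]
    return sum(per_page) / len(per_page) if per_page else 0.0


# (clicks, impressions) per page: one tiny page and one high-traffic page
rows = [(1, 2), (100, 10_000)]
print(f"group CTR: {group_ctr(rows):.2%}")  # 1.01%
print(f"naive CTR: {naive_ctr(rows):.2%}")  # 25.50%, inflated by the 2-impression page
```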

How to choose the “primary” KPI for SEO experiments

- CTR test: primary = CTR, secondary = clicks + conversions.

- Internal linking test: primary = clicks, secondary = impressions + position.

- Content refresh test: primary = clicks (or conversions), secondary = impressions + CTR.

- Schema test: primary = CTR/clicks (if snippet changes), secondary = impressions + coverage.

- Technical SEO test: primary = indexation/crawl signals, secondary = traffic/conversions over time.

- Programmatic SEO test: primary = indexation quality + clicks per page, secondary = conversions.

6) 25 high-impact SEO experiments (grouped by category)

Here are 25 SEO experiments that tend to produce measurable outcomes. Each one includes what to change and what to track. These are practical SEO tests (not theory), designed to be safe and repeatable.

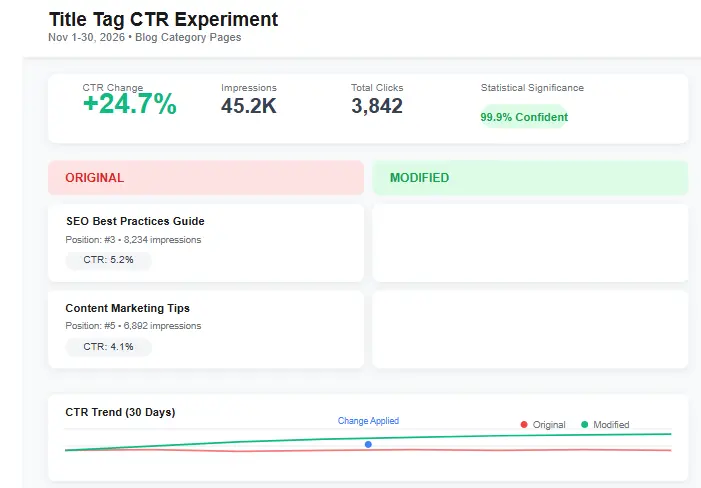

Titles/CTR experiments (fast signal)

- Value-first titles: move the core benefit to the front. Track CTR + clicks in GSC by page.

- Intent match rewrite: mirror the language of top-ranking results without copying. Track CTR and query mix.

- Reduce truncation: shorten titles that cut off meaning. Track CTR improvements for high-impression queries.

- Specificity test: add numbers/timeframes when relevant. Track CTR + conversions (GA4).

- Snippet alignment intro: rewrite first 2–3 lines to reinforce the title promise. Track CTR + engagement.

Guardrail for CTR SEO split testing: follow Google’s title link guidance to avoid patterns that cause unpredictable rewrites.
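To shortlist pages for the truncation test, you can filter for long titles on high-impression pages. Google truncates title links by available width, which varies by device, so the 60-character cutoff below is only a rough, hypothetical proxy. The page dictionaries stand in for a crawl export joined with GSC impressions.

```python
def truncation_candidates(
    pages: list[dict], max_chars: int = 60, min_impressions: int = 1_000
) -> list[tuple[str, str]]:
    """Return (url, title) pairs with long titles, highest impressions first.

    max_chars and min_impressions are hypothetical defaults, not Google limits.
    """
    flagged = [
        p for p in pages
        if len(p["title"]) > max_chars and p["impressions"] >= min_impressions
    ]
    flagged.sort(key=lambda p: p["impressions"], reverse=True)
    return [(p["url"], p["title"]) for p in flagged]


pages = [
    {"url": "/pricing", "title": "Pricing", "impressions": 50_000},
    {"url": "/guide",
     "title": "The Complete, Exhaustive, Step-by-Step Guide to Running SEO Experiments",
     "impressions": 12_000},
    {"url": "/old-post",
     "title": "A Very Long Title About Something Few People Search For At All Anymore",
     "impressions": 40},
]
for url, title in truncation_candidates(pages):
    print(url, len(title))
```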

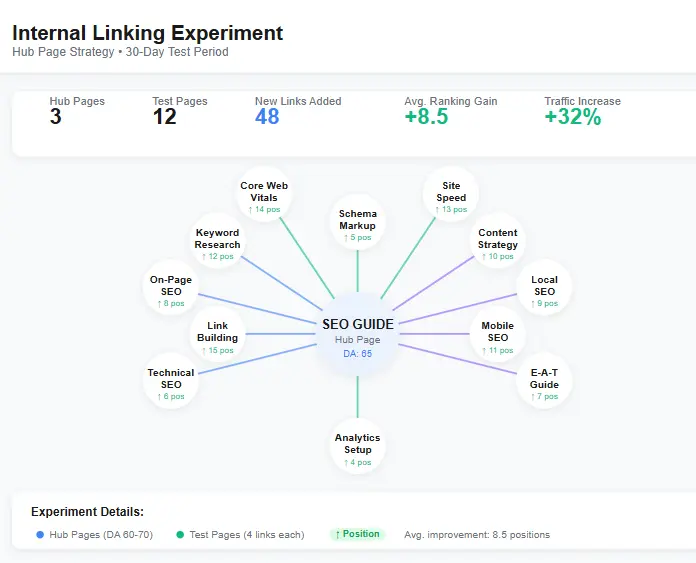

Internal linking experiments (compounding wins)

- Hub → spoke boost: add contextual links from a hub to 10–20 related pages. Track impressions + clicks growth.

- Link module test: add “related pages” module near mid-content. Track clicks + pages/session in GA4.

- Anchor clarity upgrade: change vague anchors to descriptive anchors. Track query expansion + rankings distribution.

- Reduce link depth: add links from high-authority pages to priority pages. Track crawl frequency + impressions.

To standardize internal linking SEO experiments, use the internal linking template.
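Click depth, the minimum number of clicks from the homepage to a page, is one way to quantify the "reduce link depth" experiment before and after you add links. A sketch as a breadth-first search over an internal link graph; the `links` mapping is assumed to come from your own crawl export, and the URLs are placeholders.

```python
from collections import deque


def click_depth(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search: minimum clicks from `home` to every reachable page."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit in BFS order is the shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


before = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/guides/priority"],
}
# Test change: link the priority page from a high-authority page (/services).
after = {**before, "/services": ["/guides/priority"]}

print(click_depth(before)["/guides/priority"])  # 3
print(click_depth(after)["/guides/priority"])   # 2
```

Pages missing from the result have no internal path from the homepage at all, which is worth fixing before any depth test.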

Content refresh experiments (stability + growth)

- Freshness update: refresh stats, screenshots, and examples; add “Updated in 2026” note. Track impressions → clicks.

- Missing subtopic expansion: add a focused H2 section for gaps. Track long-tail impressions and clicks.

- Above-the-fold rewrite: tighten first 200 words + add bullets. Track CTR + engagement.

- Consolidate cannibal pages: merge overlapping articles into one stronger page. Track total clicks and ranking stability.
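Before merging, it helps to confirm that two URLs really compete for the same queries. A sketch that scans a GSC export with both the query and page dimensions and lists queries where more than one URL earns meaningful impressions. The 50-impression floor is a hypothetical noise filter, and a match is a candidate for review, not proof of cannibalization.

```python
from collections import defaultdict


def overlapping_queries(
    rows: list[tuple[str, str, int]], min_impressions: int = 50
) -> dict[str, list[str]]:
    """Map each query to its competing URLs (most impressions first).

    rows: (query, page, impressions), one row per query/page pair.
    """
    pages_by_query: dict[str, dict[str, int]] = defaultdict(dict)
    for query, page, impressions in rows:
        if impressions >= min_impressions:
            pages_by_query[query][page] = impressions
    return {
        query: sorted(pages, key=pages.get, reverse=True)
        for query, pages in pages_by_query.items()
        if len(pages) > 1
    }


rows = [
    ("seo experiments", "/blog/seo-experiments", 900),
    ("seo experiments", "/blog/seo-testing-guide", 300),
    ("seo split testing", "/blog/seo-testing-guide", 400),
    ("seo split testing", "/blog/seo-experiments", 10),
]
print(overlapping_queries(rows))
```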

Schema/structured data experiments (better eligibility)

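- FAQ schema (eligible pages only): add it only where it matches on-page content naturally. Since 2023, Google shows FAQ rich results mainly for well-known, authoritative government and health sites, so expect limited snippet changes elsewhere. Track CTR + impressions changes.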

- Structured data validation cleanup: remove invalid/duplicate markup. Track coverage and indexing stability.

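- Organization/author clarity: make organization and author information consistent (for example, Organization markup and clear author attribution) to strengthen trust signals. Track branded queries and click consistency.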

- Breadcrumb alignment: make breadcrumb schema consistent sitewide. Track snippet clarity + CTR for category pages.

Reference for schema experiments: Intro to structured data.
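For the breadcrumb alignment test, generating the markup from one function keeps it identical across templates. A sketch that emits schema.org `BreadcrumbList` JSON-LD from a (name, URL) trail; the trail values are placeholders, and the output should still be checked with Google's Rich Results Test.

```python
import json


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> str:
    """Build schema.org BreadcrumbList JSON-LD from (name, url) pairs, root first."""
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }
    return json.dumps(data, indent=2)


markup = breadcrumb_jsonld([
    ("Home", "https://example.com/"),
    ("Guides", "https://example.com/guides/"),
    ("SEO Experiments", "https://example.com/guides/seo-experiments/"),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```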

Technical SEO experiments (crawl, index, speed)

- Fix indexation leaks: address canonical confusion and duplicate signals. Track coverage + indexation rate.

- Sitemap segmentation: split sitemaps by type (posts, pages, products). Track discovery and indexation improvements.

- CWV focus test: improve Core Web Vitals on top 20 landing pages. Track conversions + engagement shifts.

- Redirect chain removal: simplify internal paths and reduce hops. Track crawl efficiency and load time.

Technical reference: Google sitemap overview.
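A sketch of sitemap segmentation with Python's standard `xml.etree.ElementTree`: one sitemap per page type plus a sitemap index that points to them, following the sitemaps.org protocol. Submitting segments separately can make it easier to compare indexing by page type in Search Console. The `sitemap-<type>.xml` file names and the `pages` mapping are assumptions about your setup.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def _to_xml(root: ET.Element) -> str:
    """Serialize an element tree with a UTF-8 XML declaration."""
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(root, encoding="unicode")


def segment_sitemaps(pages: dict[str, str], base_url: str) -> dict[str, str]:
    """Split {url: page_type} into one sitemap per type plus a sitemap index.

    Returns {filename: xml_text}. This sketch does not split segments that
    exceed the protocol's 50,000-URL limit.
    """
    by_type: dict[str, list[str]] = {}
    for url, page_type in pages.items():
        by_type.setdefault(page_type, []).append(url)

    files: dict[str, str] = {}
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for page_type, urls in sorted(by_type.items()):
        filename = f"sitemap-{page_type}.xml"  # hypothetical naming scheme
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for url in urls:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
        files[filename] = _to_xml(urlset)
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = f"{base_url}/{filename}"
    files["sitemap-index.xml"] = _to_xml(index)
    return files


site_pages = {
    "https://example.com/blog/seo-experiments/": "posts",
    "https://example.com/about/": "pages",
    "https://example.com/products/tracker/": "products",
}
for name, xml_text in segment_sitemaps(site_pages, "https://example.com").items():
    print(name, len(xml_text))
```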

Programmatic SEO experiments (scale without thin content)

- Unique value block: add a “tool output / comparison / local insight” section to pSEO pages. Track CTR + conversions.

- Template order test: move the best answer above the fold. Track CTR + engagement.

- Indexation batching: publish in batches, validate coverage before scaling. Track indexation quality.

- Automated internal linking rules: add related links with strict relevance rules. Track impressions breadth.
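One way to make "strict relevance rules" concrete is to link pages only when their attribute sets overlap enough. This sketch scores pairs with Jaccard similarity (shared tags divided by combined tags); the tags, the 0.3 threshold, and the five-link cap are hypothetical and should come from your own page data.

```python
def related_links(
    page_tags: dict[str, set[str]], min_similarity: float = 0.3, max_links: int = 5
) -> dict[str, list[str]]:
    """Pick up to max_links related pages per page, using Jaccard similarity of tag sets.

    Pages below min_similarity get no link, even if that leaves a page with none.
    """
    result: dict[str, list[str]] = {}
    for page, tags in page_tags.items():
        scored = []
        for other, other_tags in page_tags.items():
            union = tags | other_tags
            if other == page or not union:
                continue
            score = len(tags & other_tags) / len(union)
            if score >= min_similarity:
                scored.append((score, other))
        scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best match first, ties by URL
        result[page] = [other for _, other in scored[:max_links]]
    return result


page_tags = {
    "/crm-for-dentists": {"crm", "dental", "smb"},
    "/crm-for-lawyers": {"crm", "legal", "smb"},
    "/dental-billing": {"dental", "billing"},
    "/hr-software": {"hr"},
}
print(related_links(page_tags))
```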

7) How to design an experiment (sample templates)

The easiest way to improve SEO experiments is to standardize how you write them. Clear briefs prevent scope creep (“let’s change one more thing”), and they make reporting easy. Use the sample templates included in the tracker.

8) Timeframes + how to avoid false positives

The most expensive error in SEO experiments is false certainty. A “winner” that was actually noise can waste months when scaled. Use time windows that match the system you’re influencing (SERP behavior vs crawling vs indexation vs content evaluation).

Recommended time windows

- CTR/title tests: 14–28 days (often fastest)

- Internal linking tests: 21–56 days (crawl + re-evaluation time)

- Content refresh tests: 28–90 days (impressions often lead, clicks follow)

- Technical SEO experiments: 7–42 days for crawl/index signals, longer for traffic impact

- Programmatic SEO experiments: 30–120+ days (batching helps reduce risk)
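To avoid comparing mismatched date ranges, pre and post windows can be generated mechanically with equal length, rounded up to whole weeks so both cover the same weekdays. The minimum durations below reuse the lower bounds from the list above; treat them as floors, not targets. The `experiment_type` keys are illustrative names.

```python
from datetime import date, timedelta

# Lower bound of each recommended window above, in days (a floor, not a target).
MIN_DAYS = {
    "ctr": 14,
    "internal_linking": 21,
    "content_refresh": 28,
    "technical": 7,  # crawl/index signals only; traffic needs longer
    "programmatic": 30,
}


def comparison_windows(go_live: date, days: int) -> tuple[tuple[date, date], tuple[date, date]]:
    """Equal-length pre and post windows (inclusive dates), rounded up to whole weeks."""
    weeks = -(-days // 7)  # ceiling division
    length = timedelta(days=7 * weeks)
    pre = (go_live - length, go_live - timedelta(days=1))
    post = (go_live, go_live + length - timedelta(days=1))
    return pre, post


def is_complete(experiment_type: str, go_live: date, today: date) -> bool:
    """True once the whole post window for this experiment type is in the past."""
    _, (_, post_end) = comparison_windows(go_live, MIN_DAYS[experiment_type])
    return today > post_end


pre, post = comparison_windows(date(2026, 3, 2), MIN_DAYS["ctr"])
print("pre:", pre, "post:", post)
```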

Common mistakes

- Comparing different date ranges (e.g., this month vs last month with different seasonality).

- Mixing page types (category pages and blog posts) in one SEO test.

- Changing more than one module during the experiment window.

- Not tracking conversions—so you optimize clicks and lose revenue.

- Scaling a “winner” without rerunning the test on a second batch.
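Comparing the test group against a control group over the same dates is a direct guard against seasonality and sitewide swings: whatever moves both groups cancels out. A minimal difference-in-differences sketch on relative change; the click totals are made-up examples, and with small page sets a net lift of a few points can still be noise.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change, e.g. 0.20 for +20%."""
    return (after - before) / before


def net_lift(test_before: float, test_after: float,
             control_before: float, control_after: float) -> float:
    """Test-group change minus control-group change over the same windows."""
    return relative_change(test_before, test_after) - relative_change(control_before, control_after)


# Both groups grew, but most of the growth was sitewide: net lift is about +5 points.
print(f"{net_lift(1000, 1200, 1000, 1150):+.1%}")
# A holiday dip hit both groups; the test group fell less, so net lift is about +15 points.
print(f"{net_lift(1000, 950, 1000, 800):+.1%}")
```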

9) Reporting: experiment results dashboard (simple table + narrative)

Reporting turns SEO experiments into organizational knowledge. A simple dashboard plus a short narrative is enough. Keep it readable: what changed, what happened, why it likely happened, what you’ll do next.

| Experiment | Pages | Primary KPI | Baseline | Result | Decision | Notes |
|---|---|---|---|---|---|---|
| Title rewrite (CTR) | 20 test / 20 control | CTR | 2.8% | 3.4% (+21%) | Ship | Stable lift across multiple queries |
| Hub linking | 12 target pages | Clicks | 1,240 | 1,410 (+14%) | Iterate | Add 2 more hubs; tighten anchors |
| Sitemap segmentation | All new pages | Indexation rate | 68% | 79% (+11 pts) | Ship | Cleaner discovery; fewer “Discovered – currently not indexed” pages |
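The Result column mixes two kinds of change: CTR and clicks are shown as relative lift, while indexation rate is shown in percentage points. Labelling which one you use avoids confusion (CTR moving from 2.8% to 3.4% is +21% relative but only +0.6 points). A small sketch that reproduces the table's figures; the function names are illustrative.

```python
def relative_lift(baseline: float, result: float) -> float:
    """Relative change in percent, e.g. 2.8 -> 3.4 gives about 21.4."""
    return (result - baseline) / baseline * 100


def point_change(baseline_pct: float, result_pct: float) -> float:
    """Absolute change in percentage points, e.g. 68 -> 79 gives 11."""
    return result_pct - baseline_pct


def describe(baseline: float, result: float, as_points: bool = False, unit: str = "") -> str:
    """Format a result cell such as '3.4% (+21%)' or '79% (+11 pts)'."""
    if as_points:
        return f"{result:g}{unit} ({point_change(baseline, result):+.0f} pts)"
    return f"{result:g}{unit} ({relative_lift(baseline, result):+.0f}%)"


print(describe(2.8, 3.4, unit="%"))                # 3.4% (+21%)
print(describe(1240, 1410))                        # 1410 (+14%)
print(describe(68, 79, as_points=True, unit="%"))  # 79% (+11 pts)
```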

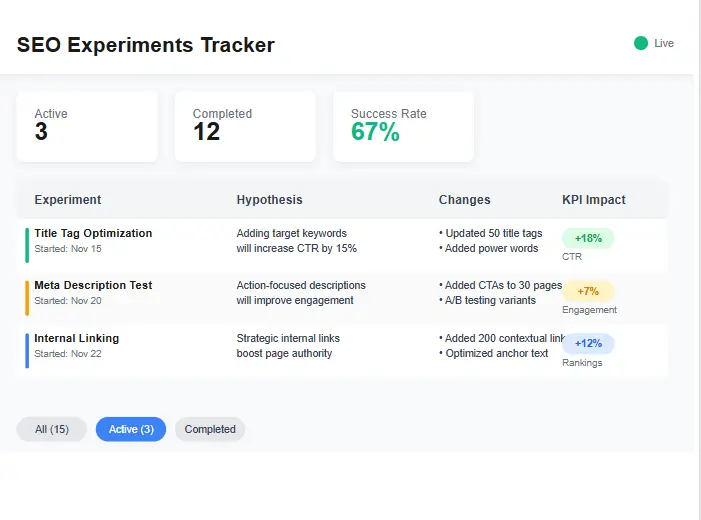

10) Download section (lead magnet) + what’s inside the tracker

To run SEO experiments consistently, you need a system. The tracker is that system: it forces isolation, measurement, and time windows. It also protects you from repeating failed SEO tests six months later because you forgot what happened.

If your experiments start from messy keyword targeting, your results will be messy too. Use the free keyword research template to build consistent page groups. For internal linking scale tests, use the internal linking template.

11) FAQ

How many pages do I need for SEO experiments?

Are rankings the best KPI for SEO experiments?

Can SEO split testing hurt my site?

What’s the fastest category of SEO experiments?

How do I avoid false positives in SEO experiments?

Do I need to submit sitemaps for technical SEO experiments?

Should I run on-page experiments on money pages first?

What’s a good success threshold for SEO experiments?

How do I document SEO case tests so they’re reusable?

How do I select a proper control group?

What if Google rewrites my title during a CTR test?

How do SEO experiments connect to E-E-A-T and trust?

12) Conclusion + CTA

Teams that win long-term don’t “guess better.” They run better SEO experiments. When you isolate one variable, measure the right KPI, and commit to a clear time window, your SEO experiments become a reliable growth engine—especially when you stack CTR wins, internal linking lifts, content refresh gains, and carefully scoped technical SEO experiments.

If you only do one thing after reading this guide, do this: run one small batch of SEO experiments (10–20 pages), track it cleanly, and write down what you learned. Then repeat. That’s how you build compounding results with SEO split testing—without risking your traffic.

External references used as guardrails for safe SEO experiments: SEO Starter Guide, Title links, Search Console, Sitemaps, Structured data intro.